
Post pruning decision trees with cost complexity pruning

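DecisionTreeClassifier provides parameters such as min_samples_leaf and max_depth to prevent a tree from overfitting. Cost complexity pruning provides another option to control the size of a tree. In DecisionTreeClassifier, this pruning technique is parameterized by the cost complexity parameter, ccp_alpha. Greater values of ccp_alpha increase the number of nodes pruned. Here we only show the effect of ccp_alpha on regularizing the trees and how to choose a ccp_alpha based on validation scores.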

See also Minimal Cost-Complexity Pruning for details on pruning.
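As a quick illustration of the claim that larger ccp_alpha values prune more nodes, the sketch below fits two trees on the same data and compares their node counts. The value 0.01 is an arbitrary choice made for illustration, not a recommended setting, and the full dataset is used here only to keep the sketch short.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

# ccp_alpha=0.0 (the default) performs no pruning.
unpruned = DecisionTreeClassifier(random_state=0, ccp_alpha=0.0).fit(X, y)

# ccp_alpha=0.01 is an arbitrary illustrative value (an assumption of this sketch).
pruned = DecisionTreeClassifier(random_state=0, ccp_alpha=0.01).fit(X, y)

print("nodes without pruning:", unpruned.tree_.node_count)
print("nodes with ccp_alpha=0.01:", pruned.tree_.node_count)
```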

# Authors: The scikit-learn developers

# SPDX-License-Identifier: BSD-3-Clause

import matplotlib.pyplot as plt

from sklearn.datasets import load_breast_cancer

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier

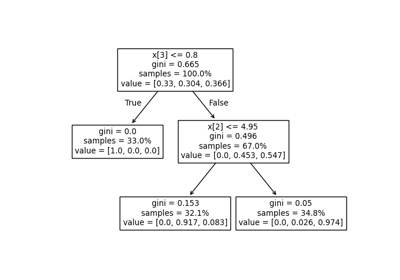

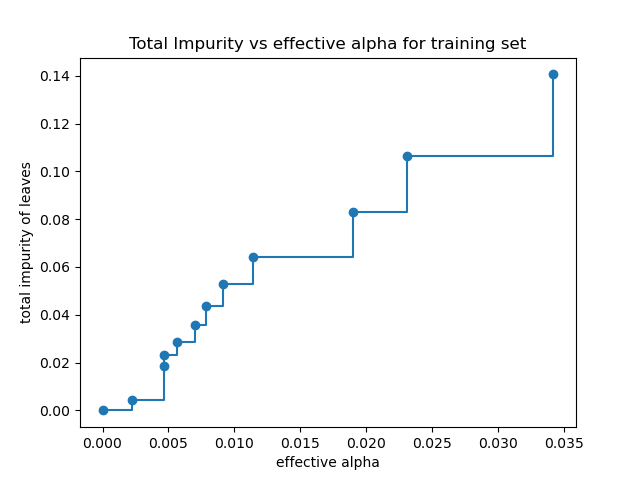

Total impurity of leaves vs effective alphas of pruned tree

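Minimal cost complexity pruning recursively finds the node with the "weakest link". The weakest link is characterized by an effective alpha, where the nodes with the smallest effective alpha are pruned first. To get an idea of which values of ccp_alpha could be appropriate, scikit-learn provides DecisionTreeClassifier.cost_complexity_pruning_path, which returns the effective alphas and the corresponding total leaf impurities at each step of the pruning process. As alpha increases, more of the tree is pruned, which increases the total impurity of its leaves.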
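For reference, the quantities behind the "effective alpha" can be written as follows. This is a sketch of the usual formulation of minimal cost-complexity pruning. The symbols are notation introduced here and do not appear elsewhere in this example: $T$ is a tree, $|\widetilde{T}|$ its number of leaves, $R(T)$ the total sample-weighted impurity of its leaves, $t$ an internal node, and $T_t$ the branch rooted at $t$.

```latex
% Cost-complexity measure of a tree T for a given alpha >= 0
R_\alpha(T) = R(T) + \alpha \, |\widetilde{T}|

% Effective alpha of an internal node t: the value of alpha at which
% collapsing the branch T_t into the single leaf t costs the same as keeping it
\alpha_{\mathrm{eff}}(t) = \frac{R(t) - R(T_t)}{|\widetilde{T_t}| - 1}
```

The node with the smallest $\alpha_{\mathrm{eff}}$ is the weakest link and is pruned first.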

X, y = load_breast_cancer(return_X_y=True)

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

clf = DecisionTreeClassifier(random_state=0)

path = clf.cost_complexity_pruning_path(X_train, y_train)

ccp_alphas, impurities = path.ccp_alphas, path.impurities
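To make the returned path more concrete, the following sketch prints each pruning step and checks that both sequences are non-decreasing, which is what the description above suggests. It repeats the data loading so that it runs on its own. The small tolerance is an assumption added to absorb floating-point noise.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

path = DecisionTreeClassifier(random_state=0).cost_complexity_pruning_path(
    X_train, y_train
)

# One row per pruning step: effective alpha and total leaf impurity after that step.
for alpha, impurity in zip(path.ccp_alphas, path.impurities):
    print(f"alpha={alpha:.5f}  total leaf impurity={impurity:.5f}")

# Tolerance of 1e-12 is an assumption to absorb floating-point rounding.
alphas_non_decreasing = bool(np.all(np.diff(path.ccp_alphas) >= -1e-12))
impurities_non_decreasing = bool(np.all(np.diff(path.impurities) >= -1e-12))
print(alphas_non_decreasing, impurities_non_decreasing)
```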

In the following plot, the maximum effective alpha value is removed, because it corresponds to the trivial tree with only one node.

fig, ax = plt.subplots()

ax.plot(ccp_alphas[:-1], impurities[:-1], marker="o", drawstyle="steps-post")

ax.set_xlabel("effective alpha")

ax.set_ylabel("total impurity of leaves")

ax.set_title("Total Impurity vs effective alpha for training set")

Text(0.5, 1.0, 'Total Impurity vs effective alpha for training set')

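Next, we train a decision tree for each of the effective alphas. The last value in ccp_alphas is the alpha value that prunes the whole tree, leaving the tree clfs[-1] with a single node.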

clfs = []

for ccp_alpha in ccp_alphas:

    clf = DecisionTreeClassifier(random_state=0, ccp_alpha=ccp_alpha)

    clf.fit(X_train, y_train)

    clfs.append(clf)

print(

    "Number of nodes in the last tree is: {} with ccp_alpha: {}".format(

        clfs[-1].tree_.node_count, ccp_alphas[-1]

    )

)

Number of nodes in the last tree is: 1 with ccp_alpha: 0.3272984419327777

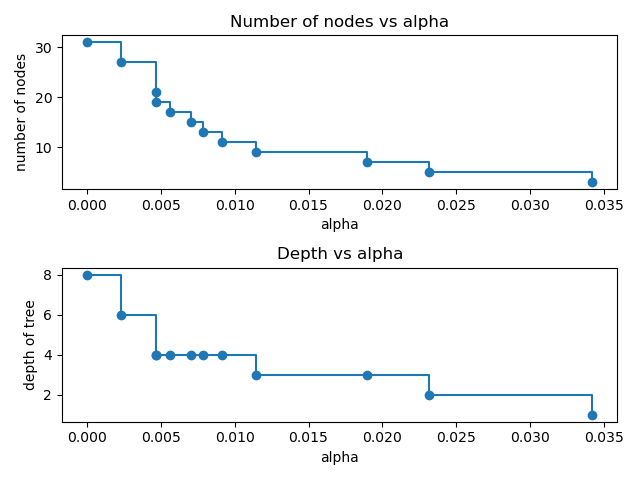

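For the remainder of this example, we remove the last element of clfs and ccp_alphas, because it is the trivial tree with only one node. Here we show that the number of nodes and the tree depth decrease as alpha increases.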

clfs = clfs[:-1]

ccp_alphas = ccp_alphas[:-1]

node_counts = [clf.tree_.node_count for clf in clfs]

depth = [clf.tree_.max_depth for clf in clfs]

fig, ax = plt.subplots(2, 1)

ax[0].plot(ccp_alphas, node_counts, marker="o", drawstyle="steps-post")

ax[0].set_xlabel("alpha")

ax[0].set_ylabel("number of nodes")

ax[0].set_title("Number of nodes vs alpha")

ax[1].plot(ccp_alphas, depth, marker="o", drawstyle="steps-post")

ax[1].set_xlabel("alpha")

ax[1].set_ylabel("depth of tree")

ax[1].set_title("Depth vs alpha")

fig.tight_layout()

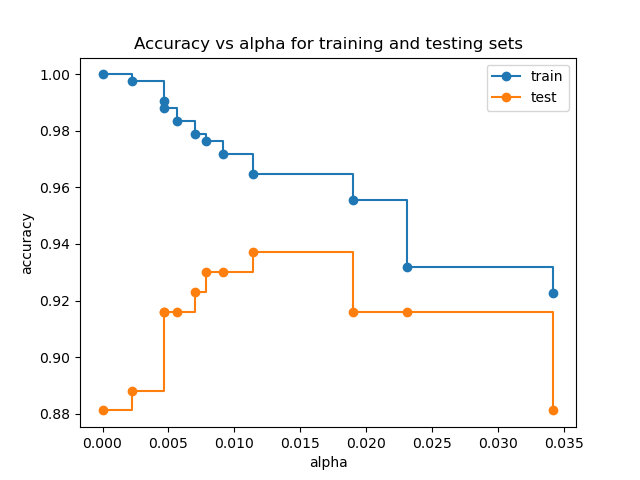

Accuracy vs alpha for training and testing sets

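When ccp_alpha is set to zero and the other parameters of DecisionTreeClassifier are kept at their defaults, the tree overfits, leading to 100% training accuracy and 88% testing accuracy. As alpha increases, more of the tree is pruned, producing a decision tree that generalizes better. In this example, setting ccp_alpha=0.015 maximizes the testing accuracy.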

train_scores = [clf.score(X_train, y_train) for clf in clfs]

test_scores = [clf.score(X_test, y_test) for clf in clfs]

fig, ax = plt.subplots()

ax.set_xlabel("alpha")

ax.set_ylabel("accuracy")

ax.set_title("Accuracy vs alpha for training and testing sets")

ax.plot(ccp_alphas, train_scores, marker="o", label="train", drawstyle="steps-post")

ax.plot(ccp_alphas, test_scores, marker="o", label="test", drawstyle="steps-post")

ax.legend()

plt.show()
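The plot above picks alpha by looking at test accuracy. One possible alternative, sketched here as an assumption rather than as part of the original example, is to select ccp_alpha by cross-validation on the training set and keep the test set for a final check. GridSearchCV and cv=5 are choices made for this sketch.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Candidate alphas from the pruning path; drop the last one (single-node tree).
path = DecisionTreeClassifier(random_state=0).cost_complexity_pruning_path(
    X_train, y_train
)
candidate_alphas = path.ccp_alphas[:-1]

# 5-fold cross-validation on the training set only (cv=5 is an arbitrary choice).
search = GridSearchCV(
    DecisionTreeClassifier(random_state=0),
    param_grid={"ccp_alpha": candidate_alphas},
    cv=5,
)
search.fit(X_train, y_train)

best_alpha = search.best_params_["ccp_alpha"]
test_accuracy = search.score(X_test, y_test)
print("selected ccp_alpha:", best_alpha)
print("held-out test accuracy:", test_accuracy)
```

Because the test set plays no part in the selection, the reported test accuracy is a less optimistic estimate than one obtained by choosing alpha directly from the test curve.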

Total running time of the script: (0 minutes 0.455 seconds)
