MiniBatchKMeans#

- class sklearn.cluster.MiniBatchKMeans(n_clusters=8, *, init='k-means++', max_iter=100, batch_size=1024, verbose=0, compute_labels=True, random_state=None, tol=0.0, max_no_improvement=10, init_size=None, n_init='auto', reassignment_ratio=0.01)[source]#

Mini-Batch K-Means 聚类。

Read more in the User Guide.

- 参数:

- n_clustersint, default=8

The number of clusters to form as well as the number of centroids to generate.

- init{‘k-means++’, ‘random’}, callable or array-like of shape (n_clusters, n_features), default=’k-means++’

Method for initialization

‘k-means++’ : selects initial cluster centroids using sampling based on an empirical probability distribution of the points’ contribution to the overall inertia. This technique speeds up convergence. The algorithm implemented is “greedy k-means++”. It differs from the vanilla k-means++ by making several trials at each sampling step and choosing the best centroid among them.

‘random’: choose

n_clustersobservations (rows) at random from data for the initial centroids.If an array is passed, it should be of shape (n_clusters, n_features) and gives the initial centers.

If a callable is passed, it should take arguments X, n_clusters and a random state and return an initialization.

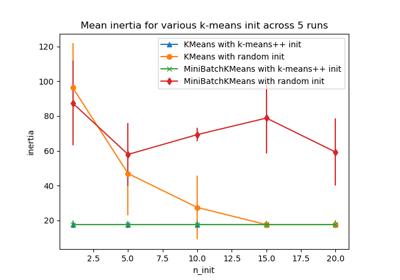

For an evaluation of the impact of initialization, see the example Empirical evaluation of the impact of k-means initialization.

- max_iterint, default=100

Maximum number of iterations over the complete dataset before stopping independently of any early stopping criterion heuristics.

- batch_sizeint, default=1024

Size of the mini batches. For faster computations, you can set

batch_size > 256 * number_of_coresto enable parallelism on all cores.Changed in version 1.0:

batch_sizedefault changed from 100 to 1024.- verboseint, default=0

Verbosity mode.

- compute_labelsbool, default=True

Compute label assignment and inertia for the complete dataset once the minibatch optimization has converged in fit.

- random_stateint, RandomState instance or None, default=None

Determines random number generation for centroid initialization and random reassignment. Use an int to make the randomness deterministic. See Glossary.

- tolfloat, default=0.0

Control early stopping based on the relative center changes as measured by a smoothed, variance-normalized of the mean center squared position changes. This early stopping heuristics is closer to the one used for the batch variant of the algorithms but induces a slight computational and memory overhead over the inertia heuristic.

To disable convergence detection based on normalized center change, set tol to 0.0 (default).

- max_no_improvementint, default=10

Control early stopping based on the consecutive number of mini batches that does not yield an improvement on the smoothed inertia.

To disable convergence detection based on inertia, set max_no_improvement to None.

- init_sizeint, default=None

Number of samples to randomly sample for speeding up the initialization (sometimes at the expense of accuracy): the only algorithm is initialized by running a batch KMeans on a random subset of the data. This needs to be larger than n_clusters.

If

None, the heuristic isinit_size = 3 * batch_sizeif3 * batch_size < n_clusters, elseinit_size = 3 * n_clusters.- n_init‘auto’ or int, default=”auto”

Number of random initializations that are tried. In contrast to KMeans, the algorithm is only run once, using the best of the

n_initinitializations as measured by inertia. Several runs are recommended for sparse high-dimensional problems (see Clustering sparse data with k-means).When

n_init='auto', the number of runs depends on the value of init: 3 if usinginit='random'orinitis a callable; 1 if usinginit='k-means++'orinitis an array-like.Added in version 1.2: Added ‘auto’ option for

n_init.Changed in version 1.4: Default value for

n_initchanged to'auto'in version.- reassignment_ratiofloat, default=0.01

Control the fraction of the maximum number of counts for a center to be reassigned. A higher value means that low count centers are more easily reassigned, which means that the model will take longer to converge, but should converge in a better clustering. However, too high a value may cause convergence issues, especially with a small batch size.

- 属性:

- cluster_centers_ndarray of shape (n_clusters, n_features)

Coordinates of cluster centers.

- labels_ndarray of shape (n_samples,)

Labels of each point (if compute_labels is set to True).

- inertia_float

The value of the inertia criterion associated with the chosen partition if compute_labels is set to True. If compute_labels is set to False, it’s an approximation of the inertia based on an exponentially weighted average of the batch inertiae. The inertia is defined as the sum of square distances of samples to their cluster center, weighted by the sample weights if provided.

- n_iter_int

Number of iterations over the full dataset.

- n_steps_int

Number of minibatches processed.

1.0 版本新增。

- n_features_in_int

在 拟合 期间看到的特征数。

0.24 版本新增。

- feature_names_in_shape 为 (

n_features_in_,) 的 ndarray 在 fit 期间看到的特征名称。仅当

X具有全部为字符串的特征名称时才定义。1.0 版本新增。

另请参阅

KMeansThe classic implementation of the clustering method based on the Lloyd’s algorithm. It consumes the whole set of input data at each iteration.

注意事项

See https://www.eecs.tufts.edu/~dsculley/papers/fastkmeans.pdf

When there are too few points in the dataset, some centers may be duplicated, which means that a proper clustering in terms of the number of requesting clusters and the number of returned clusters will not always match. One solution is to set

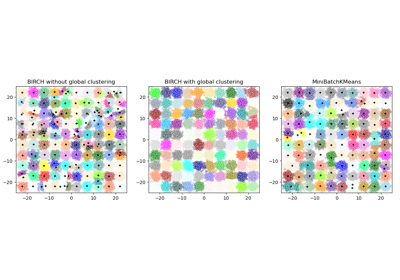

reassignment_ratio=0, which prevents reassignments of clusters that are too small.See Compare BIRCH and MiniBatchKMeans for a comparison with

BIRCH.示例

>>> from sklearn.cluster import MiniBatchKMeans >>> import numpy as np >>> X = np.array([[1, 2], [1, 4], [1, 0], ... [4, 2], [4, 0], [4, 4], ... [4, 5], [0, 1], [2, 2], ... [3, 2], [5, 5], [1, -1]]) >>> # manually fit on batches >>> kmeans = MiniBatchKMeans(n_clusters=2, ... random_state=0, ... batch_size=6, ... n_init="auto") >>> kmeans = kmeans.partial_fit(X[0:6,:]) >>> kmeans = kmeans.partial_fit(X[6:12,:]) >>> kmeans.cluster_centers_ array([[3.375, 3. ], [0.75 , 0.5 ]]) >>> kmeans.predict([[0, 0], [4, 4]]) array([1, 0], dtype=int32) >>> # fit on the whole data >>> kmeans = MiniBatchKMeans(n_clusters=2, ... random_state=0, ... batch_size=6, ... max_iter=10, ... n_init="auto").fit(X) >>> kmeans.cluster_centers_ array([[3.55102041, 2.48979592], [1.06896552, 1. ]]) >>> kmeans.predict([[0, 0], [4, 4]]) array([1, 0], dtype=int32)

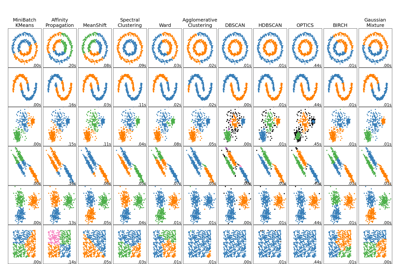

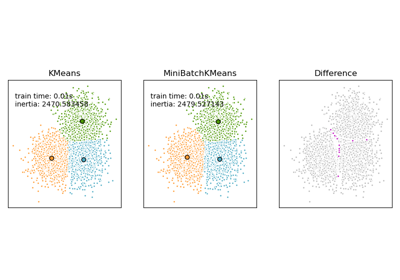

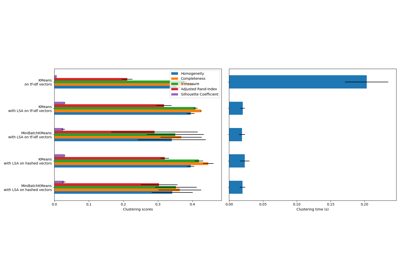

For a comparison of Mini-Batch K-Means clustering with other clustering algorithms, see Comparing different clustering algorithms on toy datasets

- fit(X, y=None, sample_weight=None)[source]#

Compute the centroids on X by chunking it into mini-batches.

- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

Training instances to cluster. It must be noted that the data will be converted to C ordering, which will cause a memory copy if the given data is not C-contiguous. If a sparse matrix is passed, a copy will be made if it’s not in CSR format.

- y被忽略

Not used, present here for API consistency by convention.

- sample_weightshape 为 (n_samples,) 的 array-like, default=None

The weights for each observation in X. If None, all observations are assigned equal weight.

sample_weightis not used during initialization ifinitis a callable or a user provided array.0.20 版本新增。

- 返回:

- selfobject

拟合的估计器。

- fit_predict(X, y=None, sample_weight=None)[source]#

Compute cluster centers and predict cluster index for each sample.

Convenience method; equivalent to calling fit(X) followed by predict(X).

- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

New data to transform.

- y被忽略

Not used, present here for API consistency by convention.

- sample_weightshape 为 (n_samples,) 的 array-like, default=None

The weights for each observation in X. If None, all observations are assigned equal weight.

- 返回:

- labelsndarray of shape (n_samples,)

Index of the cluster each sample belongs to.

- fit_transform(X, y=None, sample_weight=None)[source]#

Compute clustering and transform X to cluster-distance space.

Equivalent to fit(X).transform(X), but more efficiently implemented.

- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

New data to transform.

- y被忽略

Not used, present here for API consistency by convention.

- sample_weightshape 为 (n_samples,) 的 array-like, default=None

The weights for each observation in X. If None, all observations are assigned equal weight.

- 返回:

- X_newndarray of shape (n_samples, n_clusters)

X transformed in the new space.

- get_feature_names_out(input_features=None)[source]#

获取转换的输出特征名称。

The feature names out will prefixed by the lowercased class name. For example, if the transformer outputs 3 features, then the feature names out are:

["class_name0", "class_name1", "class_name2"].- 参数:

- input_featuresarray-like of str or None, default=None

Only used to validate feature names with the names seen in

fit.

- 返回:

- feature_names_outstr 对象的 ndarray

转换后的特征名称。

- get_metadata_routing()[source]#

获取此对象的元数据路由。

请查阅 用户指南,了解路由机制如何工作。

- 返回:

- routingMetadataRequest

封装路由信息的

MetadataRequest。

- get_params(deep=True)[source]#

获取此估计器的参数。

- 参数:

- deepbool, default=True

如果为 True,将返回此估计器以及包含的子对象(如果它们是估计器)的参数。

- 返回:

- paramsdict

参数名称映射到其值。

- partial_fit(X, y=None, sample_weight=None)[source]#

Update k means estimate on a single mini-batch X.

- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

Training instances to cluster. It must be noted that the data will be converted to C ordering, which will cause a memory copy if the given data is not C-contiguous. If a sparse matrix is passed, a copy will be made if it’s not in CSR format.

- y被忽略

Not used, present here for API consistency by convention.

- sample_weightshape 为 (n_samples,) 的 array-like, default=None

The weights for each observation in X. If None, all observations are assigned equal weight.

sample_weightis not used during initialization ifinitis a callable or a user provided array.

- 返回:

- selfobject

Return updated estimator.

- predict(X)[source]#

Predict the closest cluster each sample in X belongs to.

In the vector quantization literature,

cluster_centers_is called the code book and each value returned bypredictis the index of the closest code in the code book.- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

New data to predict.

- 返回:

- labelsndarray of shape (n_samples,)

Index of the cluster each sample belongs to.

- score(X, y=None, sample_weight=None)[source]#

Opposite of the value of X on the K-means objective.

- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

New data.

- y被忽略

Not used, present here for API consistency by convention.

- sample_weightshape 为 (n_samples,) 的 array-like, default=None

The weights for each observation in X. If None, all observations are assigned equal weight.

- 返回:

- scorefloat

Opposite of the value of X on the K-means objective.

- set_fit_request(*, sample_weight: bool | None | str = '$UNCHANGED$') MiniBatchKMeans[source]#

配置是否应请求元数据以传递给

fit方法。请注意,此方法仅在以下情况下相关:此估计器用作 元估计器 中的子估计器,并且通过

enable_metadata_routing=True启用了元数据路由(请参阅sklearn.set_config)。请查看 用户指南 以了解路由机制的工作原理。每个参数的选项如下:

True:请求元数据,如果提供则传递给fit。如果未提供元数据,则忽略该请求。False:不请求元数据,元估计器不会将其传递给fit。None:不请求元数据,如果用户提供元数据,元估计器将引发错误。str:应将元数据以给定别名而不是原始名称传递给元估计器。

默认值 (

sklearn.utils.metadata_routing.UNCHANGED) 保留现有请求。这允许您更改某些参数的请求而不更改其他参数。在版本 1.3 中新增。

- 参数:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

fit方法中sample_weight参数的元数据路由。

- 返回:

- selfobject

更新后的对象。

- set_output(*, transform=None)[source]#

设置输出容器。

有关如何使用 API 的示例,请参阅引入 set_output API。

- 参数:

- transform{“default”, “pandas”, “polars”}, default=None

配置

transform和fit_transform的输出。"default": 转换器的默认输出格式"pandas": DataFrame 输出"polars": Polars 输出None: 转换配置保持不变

1.4 版本新增: 添加了

"polars"选项。

- 返回:

- selfestimator instance

估计器实例。

- set_params(**params)[source]#

设置此估计器的参数。

此方法适用于简单的估计器以及嵌套对象(如

Pipeline)。后者具有<component>__<parameter>形式的参数,以便可以更新嵌套对象的每个组件。- 参数:

- **paramsdict

估计器参数。

- 返回:

- selfestimator instance

估计器实例。

- set_partial_fit_request(*, sample_weight: bool | None | str = '$UNCHANGED$') MiniBatchKMeans[source]#

Configure whether metadata should be requested to be passed to the

partial_fitmethod.请注意,此方法仅在以下情况下相关:此估计器用作 元估计器 中的子估计器,并且通过

enable_metadata_routing=True启用了元数据路由(请参阅sklearn.set_config)。请查看 用户指南 以了解路由机制的工作原理。每个参数的选项如下:

True: metadata is requested, and passed topartial_fitif provided. The request is ignored if metadata is not provided.False: metadata is not requested and the meta-estimator will not pass it topartial_fit.None:不请求元数据,如果用户提供元数据,元估计器将引发错误。str:应将元数据以给定别名而不是原始名称传递给元估计器。

默认值 (

sklearn.utils.metadata_routing.UNCHANGED) 保留现有请求。这允许您更改某些参数的请求而不更改其他参数。在版本 1.3 中新增。

- 参数:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for

sample_weightparameter inpartial_fit.

- 返回:

- selfobject

更新后的对象。

- set_score_request(*, sample_weight: bool | None | str = '$UNCHANGED$') MiniBatchKMeans[source]#

配置是否应请求元数据以传递给

score方法。请注意,此方法仅在以下情况下相关:此估计器用作 元估计器 中的子估计器,并且通过

enable_metadata_routing=True启用了元数据路由(请参阅sklearn.set_config)。请查看 用户指南 以了解路由机制的工作原理。每个参数的选项如下:

True:请求元数据,如果提供则传递给score。如果未提供元数据,则忽略该请求。False:不请求元数据,元估计器不会将其传递给score。None:不请求元数据,如果用户提供元数据,元估计器将引发错误。str:应将元数据以给定别名而不是原始名称传递给元估计器。

默认值 (

sklearn.utils.metadata_routing.UNCHANGED) 保留现有请求。这允许您更改某些参数的请求而不更改其他参数。在版本 1.3 中新增。

- 参数:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

score方法中sample_weight参数的元数据路由。

- 返回:

- selfobject

更新后的对象。

- transform(X)[source]#

Transform X to a cluster-distance space.

In the new space, each dimension is the distance to the cluster centers. Note that even if X is sparse, the array returned by

transformwill typically be dense.- 参数:

- Xshape 为 (n_samples, n_features) 的 {array-like, sparse matrix}

New data to transform.

- 返回:

- X_newndarray of shape (n_samples, n_clusters)

X transformed in the new space.