
Multilabel classification

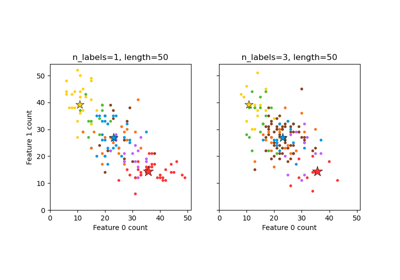

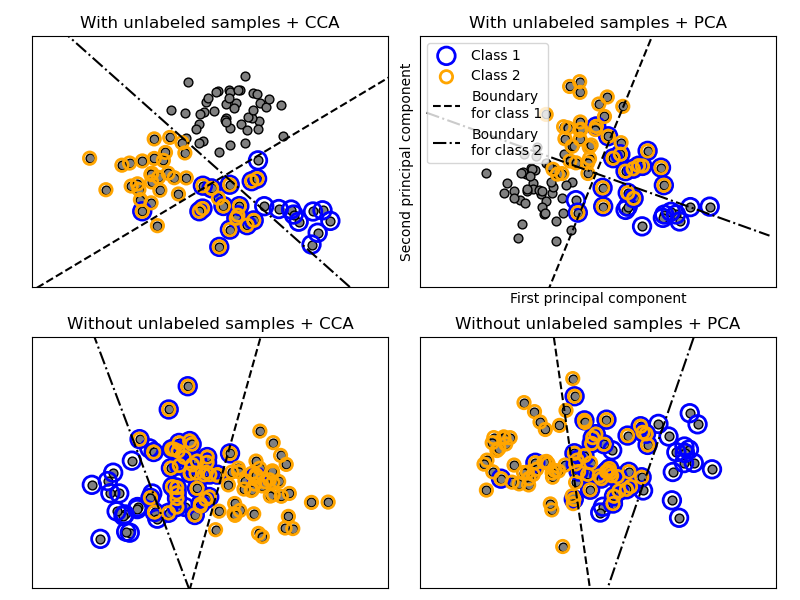

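This example simulates a multilabel document classification problem. The dataset is generated randomly according to the following process:

pick the number of labels: n ~ Poisson(n_labels)

n times, choose a class c: c ~ Multinomial(theta)

pick the document length: k ~ Poisson(length)

k times, choose a word w: w ~ Multinomial(theta_c)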

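In the above process, rejection sampling ensures that n never exceeds the number of classes (2 in this example) and that the document length is never zero. When unlabeled samples are not allowed, n is also never zero. Likewise, classes that have already been chosen are rejected. Documents assigned to both classes are plotted surrounded by two colored circles.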
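The sampling steps above can be sketched with plain NumPy. This is a minimal illustration under stated assumptions, not the code that `make_multilabel_classification` runs: the function name `sample_document`, the uniform class prior, the Dirichlet-drawn word distributions, and the rule for picking a word's class are all hypothetical choices made for the example.

```python
import numpy as np


def sample_document(rng, theta, theta_c, n_labels=1, length=50, allow_unlabeled=True):
    """Draw one (word_counts, labels) pair following the described process."""
    n_classes, n_features = theta_c.shape

    # Rejection sampling: redraw n until it is at most n_classes
    # (and non-zero when unlabeled documents are not allowed).
    n = n_classes + 1
    while n > n_classes or (n == 0 and not allow_unlabeled):
        n = rng.poisson(n_labels)

    # Draw n distinct classes; a class that was already chosen is rejected.
    labels = set()
    while len(labels) < n:
        labels.add(int(rng.choice(n_classes, p=theta)))

    # Rejection sampling: the document length must not be zero.
    k = 0
    while k == 0:
        k = rng.poisson(length)

    # Draw k words. Assumption: each word uses the word distribution of a
    # class picked uniformly among the document's labels; unlabeled
    # documents draw words uniformly.
    counts = np.zeros(n_features, dtype=int)
    for _ in range(k):
        if labels:
            c = rng.choice(sorted(labels))
            w = rng.choice(n_features, p=theta_c[c])
        else:
            w = rng.integers(n_features)
        counts[w] += 1
    return counts, sorted(labels)


rng = np.random.default_rng(0)
theta = np.array([0.5, 0.5])                  # hypothetical class prior
theta_c = rng.dirichlet(np.ones(20), size=2)  # hypothetical per-class word distributions
counts, labels = sample_document(rng, theta, theta_c)
counts_l, labels_l = sample_document(rng, theta, theta_c, allow_unlabeled=False)
```

Each call returns a bag-of-words count vector and a sorted list of distinct class indices, so a document with two labels corresponds to a point drawn with two circles in the plot.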

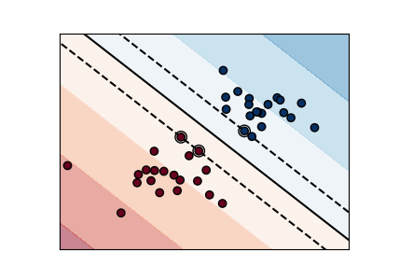

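For visualization, the data is projected onto the first two components found by PCA and by CCA. A OneVsRestClassifier metaclassifier then fits one SVC with a linear kernel per class, two in total, to learn a discriminative model for each class. Note that PCA performs unsupervised dimensionality reduction, while CCA performs supervised dimensionality reduction.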

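Note: in the plot, "unlabeled samples" does not mean that the labels are unknown (as in semi-supervised learning), but that the samples simply have no label.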
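In the label indicator matrix, a sample without any label is simply a row of zeros. A short sketch, assuming the same dataset parameters as this example, counts such rows and the rows that carry both labels; the variable names are illustrative.

```python
import numpy as np
from sklearn.datasets import make_multilabel_classification

X, Y = make_multilabel_classification(
    n_classes=2, n_labels=1, allow_unlabeled=True, random_state=1
)

labels_per_sample = Y.sum(axis=1)
n_unlabeled = int(np.sum(labels_per_sample == 0))    # rows with no label at all
n_both = int(np.sum(labels_per_sample == 2))         # rows assigned to both classes

# With allow_unlabeled=False, every row carries at least one label.
_, Y_labeled = make_multilabel_classification(
    n_classes=2, n_labels=1, allow_unlabeled=False, random_state=1
)
min_labels = int(Y_labeled.sum(axis=1).min())

print(n_unlabeled, n_both, min_labels)
```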

# Authors: The scikit-learn developers
# SPDX-License-Identifier: BSD-3-Clause

import matplotlib.pyplot as plt
import numpy as np

from sklearn.cross_decomposition import CCA
from sklearn.datasets import make_multilabel_classification
from sklearn.decomposition import PCA
from sklearn.multiclass import OneVsRestClassifier
from sklearn.svm import SVC

def plot_hyperplane(clf, min_x, max_x, linestyle, label):
    # get the separating hyperplane
    w = clf.coef_[0]
    a = -w[0] / w[1]
    xx = np.linspace(min_x - 5, max_x + 5)  # make sure the line is long enough
    yy = a * xx - (clf.intercept_[0]) / w[1]
    plt.plot(xx, yy, linestyle, label=label)

def plot_subfigure(X, Y, subplot, title, transform):
    if transform == "pca":
        X = PCA(n_components=2).fit_transform(X)
    elif transform == "cca":
        X = CCA(n_components=2).fit(X, Y).transform(X)
    else:
        raise ValueError

    min_x = np.min(X[:, 0])
    max_x = np.max(X[:, 0])

    min_y = np.min(X[:, 1])
    max_y = np.max(X[:, 1])

    classif = OneVsRestClassifier(SVC(kernel="linear"))
    classif.fit(X, Y)

    plt.subplot(2, 2, subplot)
    plt.title(title)

    zero_class = (Y[:, 0]).nonzero()
    one_class = (Y[:, 1]).nonzero()
    plt.scatter(X[:, 0], X[:, 1], s=40, c="gray", edgecolors=(0, 0, 0))
    plt.scatter(
        X[zero_class, 0],
        X[zero_class, 1],
        s=160,
        edgecolors="b",
        facecolors="none",
        linewidths=2,
        label="Class 1",
    )
    plt.scatter(
        X[one_class, 0],
        X[one_class, 1],
        s=80,
        edgecolors="orange",
        facecolors="none",
        linewidths=2,
        label="Class 2",
    )

    plot_hyperplane(
        classif.estimators_[0], min_x, max_x, "k--", "Boundary\nfor class 1"
    )
    plot_hyperplane(
        classif.estimators_[1], min_x, max_x, "k-.", "Boundary\nfor class 2"
    )
    plt.xticks(())
    plt.yticks(())

    plt.xlim(min_x - 0.5 * max_x, max_x + 0.5 * max_x)
    plt.ylim(min_y - 0.5 * max_y, max_y + 0.5 * max_y)
    if subplot == 2:
        plt.xlabel("First principal component")
        plt.ylabel("Second principal component")
        plt.legend(loc="upper left")

plt.figure(figsize=(8, 6))

X, Y = make_multilabel_classification(
    n_classes=2, n_labels=1, allow_unlabeled=True, random_state=1
)

plot_subfigure(X, Y, 1, "With unlabeled samples + CCA", "cca")
plot_subfigure(X, Y, 2, "With unlabeled samples + PCA", "pca")

X, Y = make_multilabel_classification(
    n_classes=2, n_labels=1, allow_unlabeled=False, random_state=1
)

plot_subfigure(X, Y, 3, "Without unlabeled samples + CCA", "cca")
plot_subfigure(X, Y, 4, "Without unlabeled samples + PCA", "pca")

plt.subplots_adjust(0.04, 0.02, 0.97, 0.94, 0.09, 0.2)
plt.show()
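The `plot_hyperplane` helper in the script draws the set of points where a linear decision function is zero. In two dimensions that set satisfies w[0] * x + w[1] * y + b = 0, which rearranges to y = -(w[0] / w[1]) * x - b / w[1] whenever w[1] is non-zero. The sketch below checks this rearrangement numerically; the function name `boundary_line` and the coefficient values are hypothetical and stand in for a fitted classifier's `coef_[0]` and `intercept_[0]`.

```python
import numpy as np


def boundary_line(w, b, xx):
    """Return y values such that w[0] * x + w[1] * y + b == 0 for each x in xx."""
    slope = -w[0] / w[1]  # requires w[1] != 0 (the boundary is not vertical)
    return slope * xx - b / w[1]


w = np.array([2.0, -1.0])  # hypothetical coefficients
b = 0.5                    # hypothetical intercept
xx = np.linspace(-3, 3, 7)
yy = boundary_line(w, b, xx)

# The decision function evaluated on the line should vanish everywhere.
residual = w[0] * xx + w[1] * yy + b
print(np.allclose(residual, 0.0))
```

A boundary with w[1] equal to zero would be a vertical line and would need separate handling, which the helper in this example does not attempt.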

Total running time of the script: (0 minutes 0.154 seconds)
